Dental AI Essentials: Dr. Robot Will See You Now

Apr 26, 2026

This is Part 1 of Dental AI Essentials: A Practical Series for Dental Teams.

This series is designed to help Canadian dental practices use AI safely, practically, and with confidence - covering everything from privacy and risk to workflows, supervision, and team training.

AI is showing up everywhere in dentistry right now: chart notes, patient communication, insurance details, marketing, training, and even clinical decision support.

Depending on who you ask, AI is either the most powerful productivity tool your practice has ever seen, or a liability waiting to happen.

The truth is somewhere in between, and that is why I am writing this series.

What I am seeing right now is not a lack of interest in AI. It is a lack of clarity.

Dental teams are curious. Vendors are moving quickly. Practice owners are wondering what is safe, what is useful, and what privacy concerns or professional risks come with it.

The question cannot only be: “Can we use AI?”

The better question is: “Are we using AI safely, appropriately, and in a way that protects our patients?”

That distinction matters because adopting AI is not like moving from film to digital X-rays. It is closer to hiring a very fast, very confident, but occasionally wrong intern.

Helpful? Absolutely. Independent? Not quite.

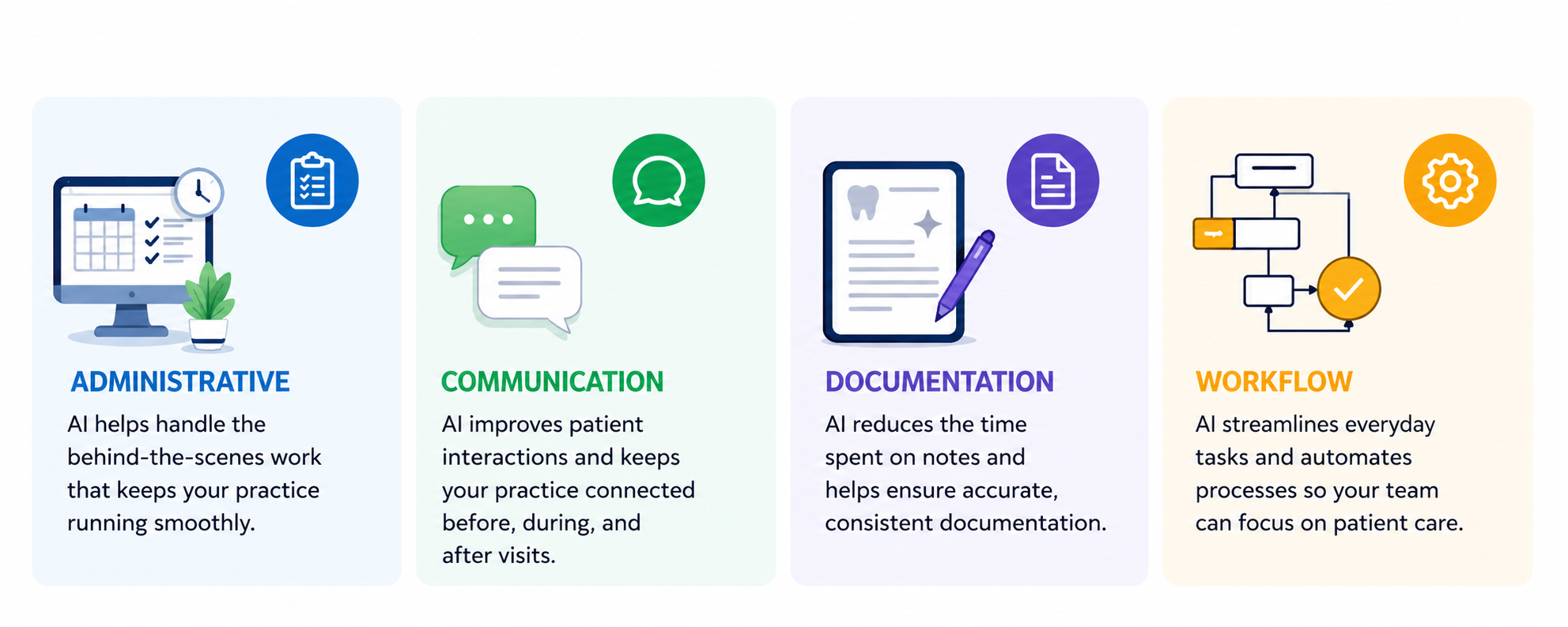

How AI helps dentistry

AI can support dental practices in administrative, communication, documentation, and workflow tasks, but it does not replace professional judgment, clinical responsibility, privacy obligations, or team training.

Used well, AI can help dental teams save time and improve consistency.

Used poorly, it can create problems that sound polished, professional, and completely believable - right up until someone realizes the output is wrong, inappropriate, or based on information that should never have been entered into the tool.

What AI can do well in a dental practice

AI is useful when it is treated as a support tool.

For example, AI may help dental teams:

- Draft patient-friendly explanations

- Summarize general educational content

- Create first drafts of insurance narratives

- Brainstorm marketing ideas

- Build checklists and templates

- Simplify complex instructions into plain language

- Support internal training materials

All this can be valuable in a busy practice.

A dental office is full of repetitive writing, time constraints, and communication tasks. If AI helps reduce some of that load, then it is absolutely worth exploring.

But the key word is support: AI should help the team think, not replace the team’s thinking.

What AI cannot do for you

AI does not understand your patient the way your clinical team does.

It does not know the full chart context unless someone provides it. It does not carry professional responsibility. It does not understand consent the way a regulated health professional must. It does not know your provincial privacy obligations unless your team has built those obligations into your process.

AI may produce answers that sound clear and confident even when they are incomplete, outdated, biased, or wrong. Standards bodies such as NIST highlight trustworthiness characteristics such as validity, reliability, safety, security, accountability, transparency, explainability, privacy enhancement, and fairness as important considerations when organizations use AI systems.[1]

That matters because dental practices do not operate in a low-risk content environment. You handle patient information, health records, billing details, images, treatment plans, and communications that patients may rely on.

If AI helps draft something, a trained human still needs to review it.

The biggest mistake is treating AI like a magic button

Most practices do not get into trouble because someone intentionally misuses AI.

They get into trouble because no one has laid out the rules.

A team member tries a tool because it seems helpful. Another person pastes in a note to make it sound more professional. Someone else uses an AI-generated patient explanation because it reads nicely. No one is trying to create risk, but without a strategy the practice may not know:

- What tools are approved

- Whether patient information can be entered

- Where the data goes

- Whether the tool uses prompts for training

- Who must review AI-generated content

- What must be documented

- When AI should not be used at all

That is not an AI problem. That is a governance problem wearing a very shiny hat.
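For practices that have someone comfortable with a little scripting, the unanswered questions above can be written down as one record per tool. The Python sketch below is an illustration only, not a compliance tool: the `ToolPolicy` fields, the `check_use` function, and the tool name `DraftHelper` are all hypothetical, and a real register would reflect your own policies and privacy obligations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolPolicy:
    """One entry in a hypothetical practice AI register (illustrative fields)."""
    name: str
    approved: bool              # has the practice reviewed and approved this tool?
    patient_info_allowed: bool  # may patient information be entered?
    data_location: str          # where the vendor says the data goes
    trains_on_prompts: bool     # does the vendor use prompts for training?
    reviewer_role: str          # who must review AI-generated content


def check_use(policy: Optional[ToolPolicy], contains_patient_info: bool) -> str:
    """Return a plain-language decision for a proposed use of a tool."""
    if policy is None or not policy.approved:
        return "Stop: tool is not on the approved list."
    if contains_patient_info and not policy.patient_info_allowed:
        return "Stop: patient information may not be entered into this tool."
    return (
        f"Proceed: output must be reviewed by the {policy.reviewer_role} "
        "and documented."
    )


# Example register with a single made-up tool.
register = {
    "DraftHelper": ToolPolicy(
        name="DraftHelper",
        approved=True,
        patient_info_allowed=False,
        data_location="unknown",
        trains_on_prompts=True,
        reviewer_role="dentist",
    ),
}

print(check_use(register.get("DraftHelper"), contains_patient_info=True))
print(check_use(register.get("SomeOtherChatbot"), contains_patient_info=False))
```

The point of the sketch is that every question in the list has a recorded answer before anyone uses the tool. A shared spreadsheet or a one-page policy does the same job.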

Why this matters in Canada

Canadian dental practices need to look at AI through a privacy lens.

Depending on the province and the type of information involved, practices may need to consider federal privacy law such as PIPEDA, provincial private-sector privacy laws, and provincial health privacy laws. The Office of the Privacy Commissioner of Canada explains that PIPEDA’s fair information principles include accountability, consent, limiting collection, limiting use and disclosure, accuracy, safeguards, and openness.[2]

In plain language: if your practice collects, uses, discloses, stores, or transfers patient information, you remain responsible for how that information is handled.

That responsibility does not disappear because a tool is popular, convenient, or labelled “AI.”

Before a dental practice uses AI with patient-related information, it should understand the tool’s privacy terms, data storage, access controls, retention, training use, and whether the use fits the purpose for which the patient information was collected.

A simple dental example

A team member wants to use AI to make a treatment explanation easier for a patient to understand.

That sounds reasonable.

But the risk depends on how it is done.

Lower-risk approach:

- Use general, non-identifying information

- Ask AI to explain a general concept in plain language

- Have the dentist or appropriate team member review the final wording

- Make sure the explanation matches the patient’s actual situation

Higher-risk approach:

- Paste the patient’s chart note into an unapproved public AI tool

- Include identifying details

- Send the AI-generated answer without clinical review

- Save no record of what was changed or approved

Same intention. Very different risk.

This is why training matters.
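The contrast between the two approaches can also be expressed as a short checklist. The Python sketch below is a minimal illustration under stated assumptions: `DraftRequest`, its fields, and `risk_flags` are hypothetical names, and the four checks simply mirror the four higher-risk bullets above rather than any official standard.

```python
from dataclasses import dataclass


@dataclass
class DraftRequest:
    """A proposed use of AI for a patient explanation (hypothetical fields)."""
    includes_identifying_details: bool
    tool_is_approved: bool
    reviewed_by_clinician: bool
    change_record_kept: bool


def risk_flags(req: DraftRequest) -> list[str]:
    """List which higher-risk conditions apply to this request."""
    flags = []
    if includes := req.includes_identifying_details:
        flags.append("identifying details included")
    if not req.tool_is_approved:
        flags.append("tool not approved by the practice")
    if not req.reviewed_by_clinician:
        flags.append("no clinical review before sending")
    if not req.change_record_kept:
        flags.append("no record of what was changed or approved")
    return flags


# The lower-risk approach raises no flags; the higher-risk one raises all four.
lower_risk = DraftRequest(False, True, True, True)
higher_risk = DraftRequest(True, False, False, False)
print(risk_flags(lower_risk))
print(len(risk_flags(higher_risk)))
```

An empty list does not mean a use is risk-free. It only means none of the four conditions named in this example apply.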

What dental practices considering AI should do next

Before your practice goes any further with AI, start with a short practical review.

Ask:

- What are we using AI for now?

- Which tools are approved?

- Are staff allowed to enter patient information?

- Who reviews AI-generated work before it is used?

- Do we understand the privacy and data terms of the tool?

- Have we trained the team on safe AI use?

If the answer to several of these is “not sure,” that is not a failure.

It is your starting point.

Recommended education

For dental teams that want clear, practical guidance, Myla’s AI Essentials training helps practices understand AI risks, privacy considerations, safe workflows, and team responsibilities.

Learn more here: https://mylatraining.com/training

The goal is not to scare teams away from AI. The goal is to help them use it with better judgment, better boundaries, and better documentation.

FAQ

Is AI safe to use in a dental practice?

Yes, when it is used with clear rules, proper review, privacy safeguards, and team training. AI should support dental work, not replace professional judgment.

Can AI replace clinical judgment?

No. AI can support certain tasks, but clinical judgment, patient communication, diagnosis, treatment planning, and professional responsibility remain with the dental team.

Can staff enter patient information into AI tools?

Not unless the practice has reviewed and approved the tool, understands the privacy implications, and confirms that the use complies with applicable privacy obligations and practice policies.

What is the biggest AI risk for dental practices?

One of the biggest risks is untrained use: staff using convenient tools without clear rules about patient data, review, documentation, and accountability.

Do dental teams need AI training?

Yes. Training helps staff understand what AI can do, where it can go wrong, and how to use it safely in real dental workflows.

Summary

AI can be genuinely helpful in dentistry, but it is not a magic button and it is not a replacement for human judgment.

Think of AI as a capable but overconfident intern. It can draft, summarize, organize, and suggest. But it still needs supervision, boundaries, review, and documentation.

The practices that benefit most from AI will not necessarily be the ones that adopt it first. They will be the ones that adopt it thoughtfully.

What is next in this series?

In Part 2 of Dental AI Essentials: A Practical Series for Dental Teams, we will look at one of the most important AI risks for dental practices:

Dental AI Essentials: Please Don’t Paste Mrs. Jones’ Chart Into ChatGPT

We will cover privacy, consent, patient information, and why dental teams need clear rules before using AI tools with anything that could identify a patient.

About the Author

Anne Genge is the founder of Myla Training Corp, Canada’s dental-specific AI, privacy, and cybersecurity training company. She is a national speaker and educator who helps dental teams use technology safely, protect patient information, and build practical systems that work in real clinical environments.

Learn More. Worry Less. Stay Safe.

Sources

- [1] NIST - AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- [2] Office of the Privacy Commissioner of Canada - PIPEDA Fair Information Principles: https://www.priv.gc.ca/en/privacy-topics/privacy-laws-in-canada/the-personal-information-protection-and-electronic-documents-act-pipeda/p_principle/

- [3] Health Canada - Pre-market guidance for machine learning-enabled medical devices: https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/application-information/guidance-documents/pre-market-guidance-machine-learning-enabled-medical-devices.html

- [4] Canadian Centre for Cyber Security - Generative artificial intelligence: https://www.cyber.gc.ca/en/guidance/generative-artificial-intelligence-ai-itsap00041

- [5] Health Canada, FDA, and MHRA - Transparency for machine learning-enabled medical devices: https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/transparency-machine-learning-guiding-principles.html
