Healthcare AI Scribes Can Hallucinate: Ontario Auditor General

May 13, 2026

Healthcare AI Scribes Can Hallucinate. Is Your Dental Team Trained to Catch It?

Imagine this.

A dental team starts testing an AI tool to help summarize patient conversations. A few seconds after the visit, the tool produces a polished note. It sounds professional, is neatly organized, and may even save someone a pile of paperwork.

But then a team member notices something odd.

The note includes a medication that was never mentioned, leaves out an important patient concern, or suggests a follow-up step that was never discussed.

That is the part dental teams need to understand.

AI can be helpful. But helpful is not the same as final.

Dental practices do not need to avoid AI completely. They do need dental AI training before AI tools become part of everyday practice workflows.

The Ontario Auditor General’s 2026 report on AI use in the Ontario government found that AI scribe systems tested during procurement produced inaccurate, incomplete, and hallucinated information. In testing of the 20 approved AI scribe vendors, all 20 showed at least one type of inaccuracy in generated notes. The report also found that 9 of 20 systems fabricated information, 12 of 20 captured a different drug than the one prescribed, and 17 of 20 missed key details about mental health issues in at least one test.

For dental practices, the lesson is not “never use AI.” The rule to adopt is this: AI can draft, but humans must decide.

Why This Matters for Dental Practices

Most dental practices are not starting with full clinical AI scribes.

They are more likely experimenting with AI for everyday tasks such as:

- patient messages

- admin support

- marketing drafts

- call summaries

- treatment-plan explanations

- chart-note drafts

- insurance narratives

- policy writing

- team training materials

That can feel lower risk than a clinical scribe. Sometimes it is. But the same types of problems can still appear.

AI tools can produce details that sound right but are not supported. They can leave out important context. They can create language that sounds more certain than it should. They can also raise privacy concerns if team members enter patient information into tools that have not been reviewed or approved.

A polished AI answer can be the most difficult kind of wrong to catch because it does not look messy. It looks finished.

What the Recent Ontario AI Scribe Story Tells Us

The Ontario Auditor General’s May 2026 report reviewed AI use in the Ontario government and included findings about AI scribe systems intended for use by health-care professionals. The report found that during the AI scribe procurement process, evaluators noted inaccuracies in generated medical notes from most approved vendors. These included incorrect information, AI hallucinations, and incomplete information.

The more detailed findings are especially useful for dental leaders because they show the types of errors teams should be trained to catch.

The report found that:

- 9 of 20 approved AI scribe systems fabricated information or added treatment suggestions not mentioned in simulated recordings.

- 12 of 20 captured a different drug than the one prescribed.

- 17 of 20 missed key details about patients’ mental health issues in at least one test.
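
For a sense of scale, the counts above can be converted into shares of the 20 systems tested. The short Python sketch below is only illustrative arithmetic on the figures quoted from the report; the variable names are our own and nothing here comes from the report's methodology.

```python
# Counts quoted above from the Auditor General's report (out of 20 approved systems).
TOTAL_SYSTEMS = 20
findings = {
    "fabricated information": 9,
    "captured a different drug": 12,
    "missed mental health details": 17,
}

# Convert each count to a percentage of the systems tested.
shares = {issue: count * 100 / TOTAL_SYSTEMS for issue, count in findings.items()}

for issue, pct in shares.items():
    print(f"{issue}: {pct:.0f}% of systems")
```

That works out to 45%, 60%, and 85% of the approved systems, respectively.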

The issue is not only that AI made mistakes. We already know AI can make mistakes. The bigger issue is whether health-care organizations have strong enough safeguards to catch those mistakes before they affect patient care, records, privacy, or trust.

That is where dental practices need to pay close attention.

“The Provider Will Review It” Is Not a Complete Safety Plan

One common response to AI scribe concerns is that the clinician is supposed to review and authorize the note.

That matters. But it is not enough on its own.

Review only works when the reviewer has enough time, enough training, and a clear process for what to check. A rushed approval at the end of a busy day is not the same thing as meaningful oversight.

The College of Physicians and Surgeons of Ontario says AI is meant to complement clinical care, not replace medical expertise. CPSO also says physicians need to review AI-generated information for accuracy and completeness and remain accountable for their use of AI tools, including AI tools used for medical documentation.

Dental practices should apply the same practical principle.

If AI drafts a note, email, insurance narrative, patient instruction, treatment summary, or internal policy, the practice still owns the final output.

The Human in the Loop Has to Be Real

In health care, “human in the loop” cannot be ceremonial.

It cannot mean someone clicks approve because the note looks clean. It cannot mean a team member copies AI text into a patient record without checking it against the actual appointment, chart, consent, diagnosis, treatment plan, and clinical judgment.

The human reviewer needs to know what to look for.

That includes checking for:

- facts that were never said

- missing clinical details

- wrong medications or conditions

- invented treatment recommendations

- privacy-sensitive information

- biased or inappropriate wording

- unclear statements that sound more certain than they are

- content copied into the wrong patient context

AI can help organize information. It should not be treated as the final authority on clinical facts, privacy decisions, patient records, or professional judgment.
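
One way to picture a review that is real rather than ceremonial is a checklist that blocks approval until every item has been explicitly confirmed. The Python sketch below is a minimal illustration under that assumption. The `DraftReview` class and its method names are hypothetical, the check wording is paraphrased from the list above, and it does not represent any vendor's actual workflow.

```python
from dataclasses import dataclass, field

# Checks paraphrased from the list above; the structure is a hypothetical illustration.
REVIEW_CHECKS = (
    "no facts that were never said",
    "no missing clinical details",
    "medications and conditions are correct",
    "no invented treatment recommendations",
    "privacy-sensitive information handled appropriately",
    "no biased or inappropriate wording",
    "no statements that sound more certain than they are",
    "content belongs to the correct patient",
)

@dataclass
class DraftReview:
    reviewer: str
    confirmed: set = field(default_factory=set)

    def confirm(self, check: str) -> None:
        """Record that the reviewer has actively verified one check."""
        if check not in REVIEW_CHECKS:
            raise ValueError(f"Unknown check: {check}")
        self.confirmed.add(check)

    def outstanding(self) -> list:
        """Return the checks that have not been confirmed yet."""
        return [c for c in REVIEW_CHECKS if c not in self.confirmed]

    def can_approve(self) -> bool:
        """Approval is allowed only when nothing is outstanding."""
        return not self.outstanding()

# Usage: one confirmed item is not enough to approve the draft.
review = DraftReview(reviewer="Dr. Example")
review.confirm("no facts that were never said")
print(review.can_approve())       # False
print(len(review.outstanding()))  # 7
```

The point of the design is that a clean-looking note cannot be approved by default. Each check has to be confirmed by a person who compared the draft against the actual appointment and chart.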

Can AI Tools Improve Through Real-World Use?

Yes, AI tools can improve through careful real-world use.

But that only happens when use is controlled, monitored, and supported by a feedback process.

A practice is not improving AI safety if team members are simply experimenting without rules. That may only normalize poor notes, unsafe shortcuts, or privacy risks.

The Information and Privacy Commissioner of Ontario’s AI scribe guidance says health-sector organizations should assess vendors and AI systems, set contractual safeguards, monitor AI systems over time, and build governance and accountability processes to protect personal health information and support compliance with Ontario health privacy law.

In plain language:

AI gets safer when humans use it carefully. It gets riskier when humans assume it is already safe.

What Dental Teams Should Do Before Using AI

Before a dental team uses AI for patient-related or business-critical work, the practice should be able to answer a few basic questions.

1. What AI tools are approved for use?

Team members should know which tools are allowed and which are not.

That includes tools used for writing, summarizing, recording, transcribing, translating, image analysis, patient communication, insurance support, and admin work.

If the practice has not approved a tool, team members should not enter patient information into it.
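
An approved-tools list can be as simple as a lookup that answers two questions: is this tool approved for this use, and may patient information go into it? The Python sketch below is a minimal illustration. The tool names, approved uses, and the `patient_info_allowed` flag are all hypothetical placeholders, not recommendations for any real product.

```python
# Hypothetical allowlist: names, uses, and flags are placeholders for illustration.
APPROVED_TOOLS = {
    "example-drafting-tool": {
        "uses": {"marketing drafts", "policy writing"},
        "patient_info_allowed": False,
    },
    "example-clinical-scribe": {
        "uses": {"chart-note drafts"},
        "patient_info_allowed": True,
    },
}

def is_use_approved(tool: str, use: str) -> bool:
    """True only if the tool is on the list and approved for this specific use."""
    entry = APPROVED_TOOLS.get(tool)
    return entry is not None and use in entry["uses"]

def may_enter_patient_info(tool: str) -> bool:
    """Unlisted tools never receive patient information; listed tools only if flagged."""
    entry = APPROVED_TOOLS.get(tool)
    return entry is not None and entry["patient_info_allowed"]

print(is_use_approved("example-drafting-tool", "marketing drafts"))  # True
print(may_enter_patient_info("some-unreviewed-chatbot"))             # False
```

The default matters here: anything not on the list is treated as not approved, so a new tool has to be reviewed before anyone can use it with patient information.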

2. What information must never be entered into public AI tools?

Dental teams need clear examples.

Patient names, chart details, appointment notes, radiographs, medical history, financial information, insurance details, photos, and identifying case details can all create privacy concerns if entered into the wrong tool.

This is where practical training for dental teams matters. People need more than a policy. They need realistic examples from dental workflows.

3. When is patient consent required?

AI scribes and similar tools may involve recording or processing patient conversations. CPSO guidance for physicians says patients need to be informed about how AI will be used, and consent is needed before recording conversations using AI.

Dental practices should confirm consent requirements with their regulator, privacy officer, legal advisor, or provincial privacy guidance before using AI tools that record, transcribe, or process patient encounters.

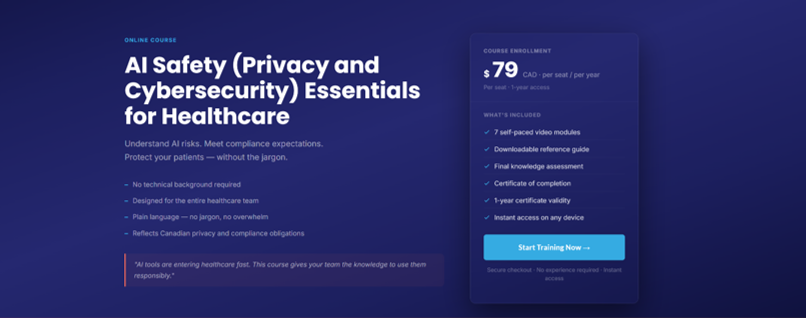

TIP: AI safety training like Myla's AI Essentials for Dental Teams course covers how to create policies, handle consent, and talk to patients about AI.

4. Who reviews AI-generated content?

Every practice should know who is responsible for reviewing AI-generated content before it is saved, sent, submitted, or copied into a record.

The answer may depend on the content.

For example:

- A dentist or authorized clinician should review clinical notes and treatment-related content.

- A privacy officer or manager may need to review privacy-sensitive workflows.

- An office manager may review admin templates or patient communication drafts.

- Marketing drafts should be checked for accuracy, privacy, and professionalism.
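
The examples above can be written down as a simple routing table so that no content type is left without a named reviewer. The Python sketch below is a hypothetical illustration. The content-type labels and the `reviewer_for` function are our own. Marketing drafts are deliberately left unassigned because the list above says what to check them for but does not name a reviewer, so each practice would need to fill that in.

```python
# Hypothetical routing table based on the examples above.
REVIEWER_BY_CONTENT = {
    "clinical note": "dentist or authorized clinician",
    "treatment-related content": "dentist or authorized clinician",
    "privacy-sensitive workflow": "privacy officer or manager",
    "admin template": "office manager",
    "patient communication draft": "office manager",
}

def reviewer_for(content_type: str) -> str:
    """Return the assigned reviewer, or fail loudly if nobody has been assigned."""
    try:
        return REVIEWER_BY_CONTENT[content_type]
    except KeyError:
        raise ValueError(
            f"No reviewer assigned for '{content_type}'; assign one before use."
        ) from None

print(reviewer_for("clinical note"))  # dentist or authorized clinician
```

Failing loudly on an unassigned content type is a design choice: it surfaces the gap instead of letting unreviewed content slip through.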

5. How are AI errors documented and reported?

If AI produces a wrong or risky output, the practice should not just fix it and move on.

There should be a simple way to document:

- what happened

- which tool was used

- what information was involved

- whether patient information was affected

- how the error was corrected

- whether the vendor or privacy officer needs to be notified

- whether the workflow should be paused or changed

That does not need to be complicated. It does need to be clear.
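
As one possible shape for such a record, the Python sketch below turns the list above into a small structured report. The `AIErrorReport` class, its field names, the added `reported_on` date field, and the sample values are all hypothetical; a paper form or spreadsheet with the same columns would serve the same purpose.

```python
from dataclasses import dataclass, asdict
from datetime import date

@dataclass
class AIErrorReport:
    reported_on: date                       # assumption: not in the list above, added for tracking
    what_happened: str
    tool_used: str
    information_involved: str
    patient_info_affected: bool
    correction: str
    notify_vendor_or_privacy_officer: bool
    pause_or_change_workflow: bool

# Hypothetical example entry, for illustration only.
report = AIErrorReport(
    reported_on=date(2026, 5, 13),
    what_happened="Draft summary listed a medication that was never mentioned",
    tool_used="example-drafting-tool",
    information_involved="Draft visit summary (not yet saved to the chart)",
    patient_info_affected=False,
    correction="Reviewer removed the medication before the note was saved",
    notify_vendor_or_privacy_officer=True,
    pause_or_change_workflow=False,
)

# asdict() gives a plain dictionary that is easy to store or export.
for key, value in asdict(report).items():
    print(f"{key}: {value}")
```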

Common Mistakes Dental Teams Should Avoid

Mistake 1: Treating AI output as finished because it sounds professional

AI can write with confidence even when it is wrong.

A clear sentence is not the same as a correct sentence.

Mistake 2: Letting each team member choose their own tools

Unapproved AI tools can create privacy, security, and documentation problems.

A practice should have one clear list of approved tools and approved uses.

Mistake 3: Training only the dentist or owner

AI risk is not limited to the dentist.

Front desk, treatment coordinators, hygienists, assistants, managers, privacy officers, and marketing support staff may all use AI in different ways.

That means the whole team needs shared rules.

Here's AI Safety Training for all dental team members: https://www.mylatraining.com/AI-Privacy-and-Cybersecurity-Essentials-2026S

Mistake 4: Assuming vendor approval removes practice responsibility

Vendor review matters. But it does not replace practice-level oversight.

The Ontario Auditor General found gaps in the AI scribe vendor evaluation process, including concerns about submitted security documents, threat risk assessments, privacy impact assessments, and bias evaluation.

Dental practices should still ask questions about privacy, security, accuracy, consent, storage, access, and vendor accountability before adopting an AI tool.

A Practical Rule for Dental AI Use

Here is the simplest rule:

AI can draft, but humans must decide.

That means AI may help write, summarize, organize, or suggest.

But humans must verify, correct, approve, and take responsibility for the final result.

Use AI as an assistant, not as an authority.

How Myla Helps

This is exactly why dental teams need dental AI training before AI tools become part of everyday practice.

Myla’s AI Safety for Dental Teams training helps dental professionals understand where AI can help, where it can go wrong, and what safeguards need to be in place before patient information, chart notes, treatment plans, insurance narratives, or patient communications are affected.

The goal is not to make every team member an AI engineer. Please, no one needs another job title by Friday.

The goal is to help the whole team recognize risky AI use, protect patient information, question suspicious outputs, and know when a human needs to slow down and verify.

Explore Myla’s dental AI training at https://www.mylatraining.com/training.

Frequently Asked Questions

Should dental practices stop using AI tools?

No.

The best approach is to pause unplanned or unsafe use, then introduce AI with training, clear policies, privacy safeguards, approved tools, and human review.

AI can be useful in dental practices, but it should not be used casually with patient information or clinical records.

Are AI scribes safe for health care?

AI scribes may be useful, but they require careful review, vendor assessment, privacy safeguards, patient consent where applicable, and ongoing monitoring.

The Ontario Auditor General’s 2026 report found inaccuracies in AI scribe systems tested during procurement, including hallucinated information, incorrect information, and incomplete information.

What is an AI hallucination?

An AI hallucination happens when an AI system generates information that sounds plausible but is false, unsupported, or not actually present in the source material.

The Ontario Auditor General’s report defines AI hallucinations as outputs that are made up, fabricated, or not based on the actual data provided to the AI system.

Who is responsible if AI creates an incorrect note?

The responsible provider or practice cannot outsource accountability to the AI tool.

CPSO guidance for physicians says physicians are accountable for their use of AI tools and need to review AI-generated information for accuracy and completeness.

Dental practices should apply the same practical standard: AI may assist, but the practice is responsible for what is saved, sent, or relied on.

What should dental teams be trained on before using AI?

Dental teams should understand:

- privacy rules

- safe prompting

- patient information risks

- AI hallucinations

- review requirements

- approved versus unapproved tools

- vendor considerations

- consent considerations

- documentation expectations

- how to report concerns

This is especially important for teams using AI in patient communication, charting, treatment explanations, insurance narratives, or admin workflows.

Summary

AI is not going away in dental practice.

That does not mean every tool belongs in your workflow today. It means dental teams need a safe, practical way to decide what belongs, what does not, and what must be checked before AI output becomes part of patient care or practice records.

The smartest dental AI strategy is not blind trust.

It is trained use, clear rules, careful review, and documented team readiness.

Before your team adopts AI tools for notes, admin, patient communication, marketing, or treatment support, make sure they know how to use them safely.


For practices that need completion records, certificates, or proof of training, learn more about documented team training at https://www.mylatraining.com/certification.

Sources / References

- Office of the Auditor General of Ontario

Source: Use of Artificial Intelligence in the Ontario Government, Special Report 2026

Claim supported: Ontario’s 2026 special report reviewed AI use in the Ontario government and reported AI scribe procurement testing concerns, including inaccurate, incomplete, and hallucinated information in AI-generated notes.

- Office of the Auditor General of Ontario

Source: Use of Artificial Intelligence in the Ontario Government (Special Audits page)

Claim supported: Source page for the Auditor General’s 2026 AI report and related report materials.

- College of Physicians and Surgeons of Ontario

Source: Using Artificial Intelligence in Clinical Practice

Claim supported: AI is meant to complement clinical care, not replace medical expertise; physicians need to review AI-generated information for accuracy and completeness; physicians remain accountable for AI use; patients should be informed when AI is used, and consent is needed before recording conversations using AI.

- Information and Privacy Commissioner of Ontario

Source: AI Scribes: Key Considerations for the Health Sector

Claim supported: Health-sector organizations should assess vendors and AI systems, use contractual safeguards, monitor AI systems over time, and maintain governance and accountability processes to protect personal health information.

About Anne Genge

Anne Genge is the founder of Myla Training Corp, a Canadian dental AI, privacy, and cybersecurity training company. She helps dental practices understand technology risk in plain language and train their teams to recognize everyday privacy, cybersecurity, and AI-related risks.

Anne is a national speaker and educator on dental cybersecurity, privacy, and AI risk. Through Myla, she creates practical training for dental teams that supports safer workflows, better documentation, and more confident decision-making.

Learn More. Worry Less. Stay Safe.™
