Dental AI Essentials: AI Hallucinations in Dentistry: Why ChatGPT Can Be Wrong

May 16, 2026

Dental AI Essentials Series

This article is part 3 of the Dental AI Essentials Series, a 6-part series designed to help Canadian dental practices use AI safely, practically, and with confidence.

Series Articles

- AI in Dentistry: What It Can (and Can’t) Do for Dental Practices

- AI and Dental Privacy in Canada

- AI Hallucinations in Dentistry

- AI Supervision in Dental Practice

- AI in the Dental Practice: What It Changes

- The SAFE Way to Use AI in Dentistry

Can AI Tools Like ChatGPT Make Mistakes in Dental Practice?

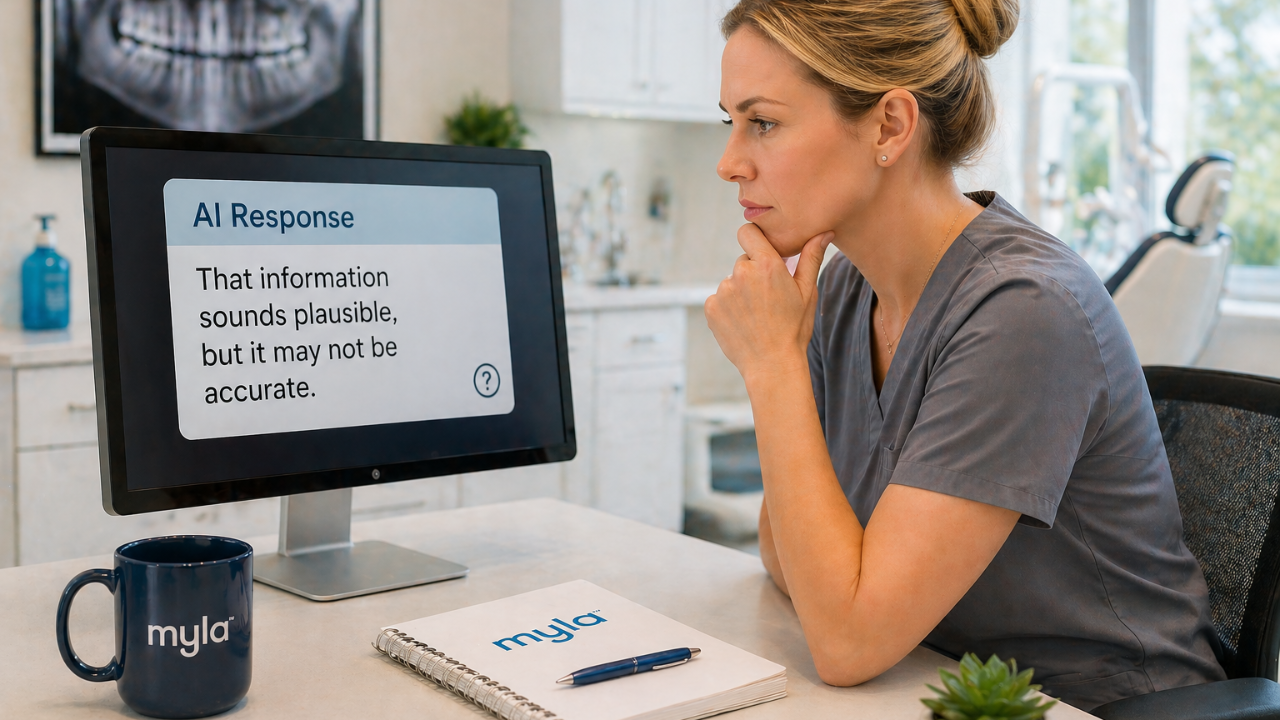

Yes. And sometimes those mistakes sound completely correct.

As AI becomes more common in dental practices across Canada, many dental teams are using tools like ChatGPT for patient communication, documentation, and administrative support. But one of the biggest risks is often misunderstood:

AI can sound confident, even when it is wrong.

In Part 1, we explained how AI works. In Part 2, we covered why patient information should not be entered into AI tools without proper approval and safeguards.

Now we address another critical risk: AI hallucinations.

What Is an AI Hallucination?

An AI hallucination occurs when an AI system generates information that is:

- Incorrect

- Unsupported

- Incomplete

- Misleading

- Or entirely made up

The risk is not just that AI makes mistakes—it is that those mistakes are often written clearly, professionally, and confidently.

Common examples include:

- Made-up facts

- Incorrect clinical explanations

- Fake or misleading citations

- Overgeneralized treatment statements

- Incomplete summaries

- Policy or legal statements that sound correct but are not

In dental practice, this matters because errors can affect:

- Patient communication

- Documentation

- Insurance narratives

- Marketing content

- Clinical support workflows

Why AI Sounds So Convincing

AI tools are designed to produce fluent, well-structured responses.

That often includes:

- Smooth wording

- Confident tone

- Professional formatting

- Clear explanations

But good writing is not the same as accuracy.

In a busy dental practice, a polished AI response can look ready to use—when it is actually just a first draft.

A useful way to think about it:

AI is like an overconfident intern: fast, articulate, and occasionally wrong.

Where Hallucinations Show Up in Dental Workflows

AI errors are not limited to clinical scenarios. They can appear in everyday tasks such as:

- Patient education materials

- Treatment explanations

- Insurance narratives

- Claim appeal letters

- Website and social media content

- Office policies

- Staff training documents

- Email templates

- Meeting summaries

Some mistakes are minor. Others can lead to:

- Patient confusion

- Documentation errors

- Compliance issues

- Loss of trust

Why Clinical Use Requires Extra Caution

There is a major difference between:

- Drafting a reminder email

- Supporting a clinical decision

Clinical AI tools require significantly higher levels of review and validation.

Organizations such as the American Dental Association and Health Canada have emphasized the importance of:

- Safety

- Accuracy

- Transparency

- Clinical validation

Key Point

Not all AI tools are equal.

A general chatbot, an administrative tool, and a clinical system each carry different levels of risk and responsibility.

Example: When AI Sounds Right, But Isn’t

A treatment coordinator asks AI:

“Explain why a patient might need a crown after a root canal.”

The response sounds excellent but includes this statement:

“A crown will prevent the tooth from breaking.”

That statement is too absolute.

A safer, reviewed version would be:

“A crown may be recommended to help protect and strengthen the tooth after root canal treatment, depending on the tooth, remaining structure, and clinical assessment.”

This is not about avoiding AI. It is about reviewing AI-generated content before using it.
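For teams that want a quick first pass before human review, the crown example can be sketched as a simple wording check. This is a minimal illustration, not a validated tool: the function name `flag_absolute_claims` and the short pattern list are assumptions made for this example, and a clean result does not mean the content is accurate.

```python
import re

# Hypothetical list of absolute phrasings to look for; a real practice
# would need to build and maintain its own list.
ABSOLUTE_PATTERNS = [
    r"\bwill prevent\b",
    r"\balways\b",
    r"\bnever\b",
    r"\bguarantee[sd]?\b",
]


def flag_absolute_claims(text: str) -> list[str]:
    """Return the absolute phrases found in the text, in pattern order."""
    found = []
    for pattern in ABSOLUTE_PATTERNS:
        match = re.search(pattern, text, flags=re.IGNORECASE)
        if match:
            found.append(match.group(0).lower())
    return found


draft = "A crown will prevent the tooth from breaking."
reviewed = (
    "A crown may be recommended to help protect and strengthen the tooth "
    "after root canal treatment, depending on the tooth, remaining "
    "structure, and clinical assessment."
)

print(flag_absolute_claims(draft))     # flags the absolute wording
print(flag_absolute_claims(reviewed))  # nothing flagged
```

A check like this only catches wording. It cannot tell whether a statement is clinically correct, so it supports the human reviewer rather than replacing them.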

A Simple Review Rule for Dental Teams

Before using AI-generated content, ask:

- Is it accurate? Does it match clinical knowledge?

- Is it appropriate? Is it suitable for this patient or situation?

- Is it allowed? Does it follow your practice’s AI and privacy policies?

- Is it reviewed? Has the appropriate person approved it?

Fast output should not become unreviewed output.
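The four review questions can be sketched as a small checklist that blocks content until every answer is yes. This is a minimal sketch under stated assumptions: the class name `ReviewChecklist` and its field names are hypothetical, chosen here to mirror the questions above.

```python
from dataclasses import dataclass


@dataclass
class ReviewChecklist:
    """Hypothetical record of the four review questions for one AI draft."""
    accurate: bool = False     # matches clinical knowledge
    appropriate: bool = False  # suitable for this patient or situation
    allowed: bool = False      # follows the practice's AI and privacy policies
    reviewed: bool = False     # approved by the appropriate person

    def unmet(self) -> list[str]:
        """Names of the checks that have not been satisfied yet."""
        return [name for name, ok in vars(self).items() if not ok]

    def ready_to_use(self) -> bool:
        """True only when all four checks have passed."""
        return not self.unmet()


draft_check = ReviewChecklist(accurate=True, appropriate=True, allowed=True)
print(draft_check.unmet())         # the approval step is still outstanding
print(draft_check.ready_to_use())
```

Defaulting every field to `False` means a draft is treated as not ready until someone actively confirms each point.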

Why Training Matters

Most AI mistakes are not dramatic; they are subtle.

Examples include:

- A patient explanation that overpromises results

- A policy summary that is slightly incorrect

- A marketing claim that was not reviewed

Over time, these small errors create risk.

Dental teams need to understand:

- What AI can and cannot do

- What requires review

- Who is responsible for approval

- When to escalate concerns

Failing to review AI-generated content can lead to:

- Patient complaints

- Compliance issues

- Reputational risk

How Dental Practices Should Respond

Before integrating AI into daily workflows, create a simple verification system.

Start with:

- Identify where AI is used. Administrative tasks, marketing, patient communication, and documentation all carry different levels of risk.

- Separate low-risk and high-risk uses. Not all tasks require the same level of review.

- Define review rules. Clarify who approves what.

- Avoid blind copy-and-paste. AI output should never go directly into patient-facing content without review.

- Train the team. Focus on hallucinations, privacy, and safe use.

- Document your process. Keep it simple but consistent.
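One way to document such a verification system is a short lookup that maps each AI use to a risk level and a reviewer. The sketch below is illustrative only: the task names, risk assignments, and reviewer roles are assumptions, and each practice would set its own.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical examples of where AI might be used and how risky each use is.
TASK_RISK = {
    "internal meeting summary": Risk.LOW,
    "appointment reminder email": Risk.LOW,
    "patient education handout": Risk.HIGH,
    "insurance narrative": Risk.HIGH,
}

# Hypothetical review rules: who approves content at each risk level.
REVIEWER = {
    Risk.LOW: "office manager",
    Risk.HIGH: "dentist",
}


def required_reviewer(task: str) -> str:
    """Return who must approve AI output for this task.

    Tasks that are not listed are treated as high risk by default.
    """
    risk = TASK_RISK.get(task, Risk.HIGH)
    return REVIEWER[risk]


print(required_reviewer("appointment reminder email"))
print(required_reviewer("insurance narrative"))
print(required_reviewer("new untested use"))
```

Every tier still has a named reviewer, so nothing goes out unreviewed, and treating unlisted tasks as high risk keeps new uses of AI from slipping through without a decision.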

Training for Dental Teams

Safe AI use in dental practice requires training—not assumptions.

Myla’s AI Essentials training for dental teams covers:

- AI risks and hallucinations

- Privacy and compliance

- Safe workflows

- Review and approval processes

👉 Learn how to safely use AI in your dental practice:

https://mylatraining.com/training

FAQ: AI Hallucinations in Dental Practice

What is an AI hallucination?

An AI hallucination is when an AI system generates incorrect or misleading information that sounds believable.

Can AI hallucinations affect dental practices?

Yes. They can impact patient communication, documentation, marketing, and clinical support content.

Does a polished AI response mean it is accurate?

No. Professional wording does not guarantee correctness.

Should AI be used for clinical decisions?

Clinical use requires strict oversight. AI should support—not replace—clinical judgment.

What is the safest approach?

Treat AI output as a draft and review it carefully before use.

Summary

AI hallucinations are dangerous because they do not always look like mistakes.

They often appear:

- Polished

- Confident

- Ready to use

For dental teams, the safest approach is simple:

Use AI as a drafting tool—not a decision-maker.

Next in the Series

AI Supervision in Dental Practice

Learn how to build simple systems that keep AI helpful without creating unnecessary risk.

About the Author

Anne Genge is the founder of Myla Training Corp, a Canadian provider of AI, privacy, and cybersecurity training for dental teams.

She is a national speaker and educator who helps dental teams use technology safely, protect patient information, and build practical systems that work in real clinical environments.

Learn More. Worry Less. Stay Safe.™

References

- National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0). https://www.nist.gov/itl/ai-risk-management-framework

- Office of the Privacy Commissioner of Canada. Privacy Guidance for Generative AI. https://www.priv.gc.ca/en/privacy-topics/technology/artificial-intelligence/

- Health Canada. Pre-market Guidance for Machine Learning-Enabled Medical Devices. https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/application-information/guidance-documents/pre-market-guidance-machine-learning-enabled-medical-devices.html

- American Dental Association. Artificial Intelligence in Dentistry. https://www.ada.org/resources/research/science-and-research-institute/oral-health-topics/artificial-intelligence-in-dentistry

- Canadian Centre for Cyber Security. Generative Artificial Intelligence (AI). https://www.cyber.gc.ca/en/guidance/generative-artificial-intelligence-ai-itsap00041
