Dental AI Essentials: AI Supervision in a Dental Practice: How to Review and Approve AI Output

May 01, 2026

Dental AI Essentials Series

This article is Part 4 of the Dental AI Essentials Series, a six-part series designed to help Canadian dental practices use AI safely, practically, and with confidence.

Series Articles

- AI in Dentistry: What It Can (and Can’t) Do for Dental Practices

- AI and Dental Privacy in Canada

- AI Hallucinations in Dentistry

- AI Supervision in Dental Practice

- AI in the Dental Practice: What It Changes

- The SAFE Way to Use AI in Dentistry

Who Is Responsible for Checking AI-Generated Content in a Dental Practice?

The answer is simple: the practice—not the AI.

As AI becomes more common in dental practices across Canada, many dental teams are using tools like ChatGPT to support communication, documentation, and workflows. But once AI starts contributing to real work, a critical question arises:

Who reviews AI output before it becomes official?

In Part 1, we explained how AI works. In Part 2, we covered why patient information must be protected. In Part 3, we explored why AI can sound correct even when it is wrong.

Now we focus on what ties all of this together: supervision.

Why AI Supervision Matters

AI tools can support dental workflows—but they do not carry professional accountability.

The practice does.

If AI helps draft:

- Patient communication

- Insurance narratives

- Clinical summaries

- Policies or training content

…the responsibility for accuracy, privacy, and appropriateness still belongs to the dental team.

AI does not:

- Sign the chart

- Respond to complaints

- Explain decisions to regulators or patients

People do.

Failing to supervise AI-generated content can lead to:

- Patient misunderstandings

- Documentation errors

- Compliance risks

- Reputational damage

Treat AI Output as Draft—Not Final

AI-generated content should always be treated as draft work.

A draft is not a decision. A suggestion is not a clinical judgment.

Even when the output sounds polished, it still requires review before use in:

- Patient-facing communication

- Documentation

- Claims and insurance submissions

- Marketing content

- Policies and procedures

Without clear review rules, AI can quietly shift from a helpful tool to unmanaged risk.

Supervision Is Not About Mistrust

Supervision does not mean your team has done something wrong.

It means your practice is building a safe and consistent workflow.

Dental teams already understand this:

- New staff are trained before working independently

- Clinical decisions are reviewed

- Documentation follows defined standards

AI needs the same structure—not because it is human, but because it can produce finished-looking work that has not been verified.

Use a Simple Review Ladder

Not all AI use carries the same level of risk.

A practical approach is to create a review ladder based on risk level.

Low-Risk Use

Examples:

- Brainstorming internal ideas

- Drafting general announcements

- Organizing non-patient notes

Review

Basic staff review for tone and accuracy.

Moderate-Risk Use

Examples:

- Patient education drafts

- Insurance narratives

- Website content

- Internal policies

Review

Reviewed by the appropriate team member, such as a dentist, manager, or trained lead.

High-Risk Use

Examples:

- Clinical decision-support tools

- Diagnostic or imaging AI

- Patient-specific recommendations

- Any workflow involving identifiable patient data

Review

- Dentist or designated authority review

- Confirm the tool is approved

- Document the review when appropriate

Key Principle

The greater the impact on patient care, privacy, or decision-making, the greater the supervision required.
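For practices that want to write the ladder down in a form that can be checked, the following is a minimal sketch in Python. It is an illustration only, not a prescribed implementation: the task labels, the function names, and the rule that unknown tasks default to the highest rung are all assumptions made for this example. The rungs and review requirements are paraphrased from the ladder above.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Review requirement per rung, paraphrased from the ladder above.
REVIEW = {
    Risk.LOW: "basic staff review for tone and accuracy",
    Risk.MODERATE: "review by a dentist, manager, or trained lead",
    Risk.HIGH: (
        "dentist or designated authority review; confirm the tool is "
        "approved; document the review when appropriate"
    ),
}

# Example tasks from the ladder; the string labels are hypothetical.
TASK_RISK = {
    "internal_brainstorm": Risk.LOW,
    "general_announcement": Risk.LOW,
    "non_patient_notes": Risk.LOW,
    "patient_education_draft": Risk.MODERATE,
    "insurance_narrative": Risk.MODERATE,
    "website_content": Risk.MODERATE,
    "internal_policy": Risk.MODERATE,
    "clinical_decision_support": Risk.HIGH,
    "diagnostic_imaging": Risk.HIGH,
    "patient_specific_recommendation": Risk.HIGH,
}


def risk_for(task: str, uses_identifiable_patient_data: bool = False) -> Risk:
    """Return the rung for a task."""
    # Any workflow involving identifiable patient data is high risk.
    if uses_identifiable_patient_data:
        return Risk.HIGH
    # Assumption: tasks not on the list default to HIGH until classified.
    return TASK_RISK.get(task, Risk.HIGH)


def required_review(task: str, uses_identifiable_patient_data: bool = False) -> str:
    """Return the review requirement that applies to a task."""
    return REVIEW[risk_for(task, uses_identifiable_patient_data)]
```

Defaulting unknown tasks to the highest rung is a cautious design choice: a new use of AI gets full review until someone deliberately places it lower on the ladder.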

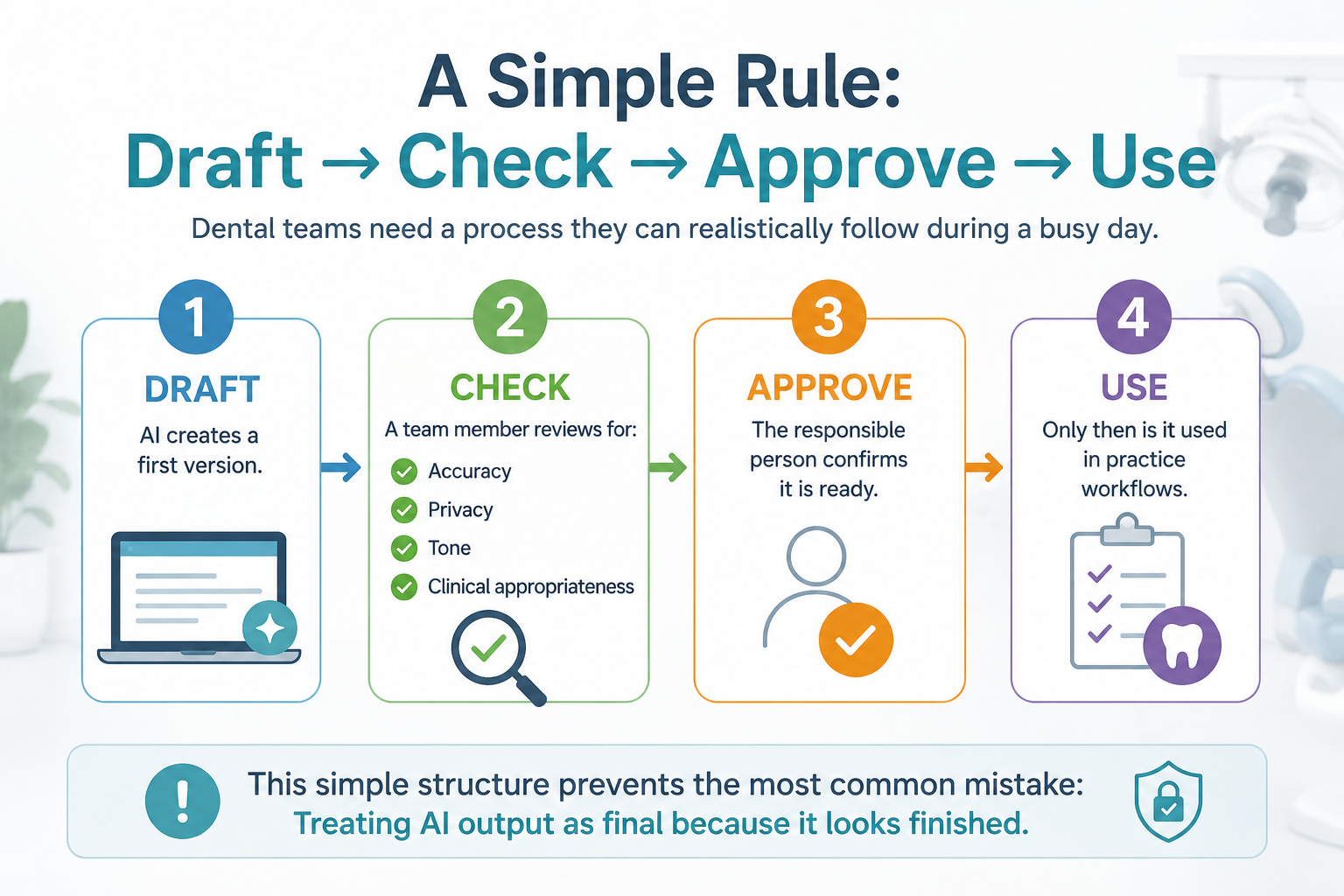

Dental teams need a review process they can realistically follow during a busy day. A four-step sequence works well: Draft, Check, Approve, Use.

Draft

AI creates a first version.

Check

A team member reviews for:

- Accuracy

- Privacy

- Tone

- Clinical appropriateness

Approve

The responsible person confirms it is ready.

Use

Only then is it used in practice workflows.

This simple structure prevents the most common mistake:

Treating AI output as final because it looks finished.
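The Draft, Check, Approve, Use sequence can be modelled as a small state check, sketched below in Python. This is an illustrative sketch under stated assumptions: the `AIDraft` class, its method names, and the reviewer name in the usage note are hypothetical. The four checks are the ones listed above. The point it demonstrates is that content cannot reach the "Use" step unless every check has been recorded and a named person has approved it.

```python
from dataclasses import dataclass, field
from typing import Optional

# The four review items from the "Check" step.
CHECKS = ("accuracy", "privacy", "tone", "clinical appropriateness")


@dataclass
class AIDraft:
    text: str
    passed_checks: set = field(default_factory=set)
    approved_by: Optional[str] = None

    def check(self, item: str) -> None:
        """Record that a team member has reviewed one item."""
        if item not in CHECKS:
            raise ValueError(f"unknown check: {item}")
        self.passed_checks.add(item)

    def approve(self, reviewer: str) -> None:
        """Approve only when every check has been recorded."""
        missing = [c for c in CHECKS if c not in self.passed_checks]
        if missing:
            raise RuntimeError(f"cannot approve; unchecked: {missing}")
        self.approved_by = reviewer

    def use(self) -> str:
        """Release the text only after approval."""
        if self.approved_by is None:
            raise RuntimeError("draft has not been approved")
        return self.text
```

In use, a reviewer would call `check(...)` for each item, then `approve("Dr. Example")`, and only then `use()`. Calling `use()` on an unapproved draft raises an error, which mirrors the rule that a draft that merely looks finished is still a draft.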

Example: AI-Assisted Insurance Narrative

A team member uses AI to draft a claim narrative.

The result is well written—but includes details not supported by the chart.

That creates risk.

A safer workflow looks like this:

- AI drafts the structure

- Unsupported details are removed

- A dentist or reviewer confirms accuracy

- The final version follows documentation policy

AI supports the process—but the dental team remains responsible.

What Should Be Documented?

Not every AI task needs documentation—but some do.

Consider documenting:

- Approved AI tools

- Allowed and prohibited uses

- Who can use each tool

- Whether patient data is permitted

- Review and approval responsibilities

- Staff training completion

- Incidents or errors involving AI

- Vendor agreements (if applicable)

Documentation is not about creating extra work.

It is about demonstrating that your practice has:

- Assessed risk

- Trained the team

- Established safeguards

This is especially important under Canadian privacy law, where accountability (the first of PIPEDA's fair information principles) must be demonstrated, not assumed.
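Several of the documentation items listed earlier in this section (approved tools, allowed uses, who can use each tool, whether patient data is permitted, and who approves) fit naturally into a simple register. The Python sketch below shows one possible shape for it. Everything here is hypothetical and for illustration only: the `ToolRecord` fields, the `may_use` function, the tool name, and the role names are assumptions, not a required format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolRecord:
    name: str
    allowed_uses: frozenset
    authorized_roles: frozenset
    patient_data_permitted: bool
    approver_role: str


def may_use(register: dict, tool: str, role: str, use: str,
            involves_patient_data: bool) -> bool:
    """Return True only if the register permits this combination."""
    record = register.get(tool)
    if record is None:
        # Tool is not on the approved list.
        return False
    if involves_patient_data and not record.patient_data_permitted:
        return False
    return role in record.authorized_roles and use in record.allowed_uses


# Hypothetical example entry.
REGISTER = {
    "general-chat-assistant": ToolRecord(
        name="general-chat-assistant",
        allowed_uses=frozenset({"internal brainstorming",
                                "general announcement draft"}),
        authorized_roles=frozenset({"front desk", "practice manager"}),
        patient_data_permitted=False,
        approver_role="practice manager",
    ),
}
```

A spreadsheet with the same columns would serve the same purpose. What matters is that the answers are written down and can be shown on request.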

Should Patients Be Told AI Is Used?

This depends on:

- How AI is used

- Whether patient data is involved

- Whether AI affects decisions

Canadian privacy regulators emphasize transparency when personal information is used, especially when that use affects the individuals concerned.

Practical Approach

Practices should clearly understand:

- Where AI is used

- Whether it impacts patient care or communication

- Whether disclosure or consent may be required

Higher-risk use cases may require additional legal or regulatory guidance.

Why Training Matters

Supervision only works if your team knows what to look for.

Without training:

- Errors are missed

- Overconfidence increases

- Policies are not followed consistently

Dental teams need to understand:

- Approved versus unapproved tools

- What patient information can be used

- How to identify unreliable AI output

- When review is required

- Who is responsible

Well-trained teams use AI safely—and confidently.

How Dental Practices Should Implement Supervision

Start simple and make it practical.

Key Steps

Assign an AI Lead

This may be a dentist, practice manager, privacy lead, or trained team member.

Create an Approved Tool List

Define which tools are allowed.

Define Allowed and Prohibited Uses

Be specific about acceptable use.

Set Review Levels

Match review requirements to risk level.

Document Your Approach

Keep it simple, practical, and usable.

Train the Entire Team

Consistency matters more than complexity.

You do not need a complex policy.

You need clear rules your team can follow during a busy day.

Training for Dental Teams

Safe AI use requires structured training.

Myla’s AI Essentials training for dental teams covers:

- AI risks and hallucinations

- Privacy and compliance

- Review and approval workflows

- Documentation expectations

👉 Learn how to safely use AI in your dental practice:

https://mylatraining.com/training

FAQ: AI Supervision in Dental Practice

Does every AI output need dentist approval?

No. Low-risk tasks may need only a basic staff review. Moderate-risk content needs review by an appropriate team member, and high-risk use needs review by a dentist or designated authority.

Who should supervise AI use?

Responsibility should be assigned—often to the dentist, practice manager, or privacy lead.

Should AI-generated content be documented?

Not in every case, but yes when it affects patient communication, claims, policies, or compliance.

Can staff use AI independently?

Only within defined rules, approved tools, and established review processes.

What is the simplest supervision rule?

Treat AI output as a draft. Check it, approve it, then use it.

Summary

AI can improve efficiency in dental practice—but it must be supervised.

The safest practices:

- Do not blindly trust AI

- Do not avoid it entirely

- Build simple, consistent workflows

Use AI as support—but keep people responsible.

Next in the Series

AI in the Dental Practice: What It Changes

Learn where AI can help—and where it should not be used.

About the Author

Anne Genge is the founder of Myla Training Corp, a Canadian provider of AI, privacy, and cybersecurity training for dental teams.

She is a national speaker and educator who helps dental teams use technology safely, protect patient information, and build practical systems that work in real clinical environments.

Learn More. Worry Less. Stay Safe.™

References

- National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0). https://www.nist.gov/itl/ai-risk-management-framework

- Office of the Privacy Commissioner of Canada. Privacy Guidance for Generative AI. https://www.priv.gc.ca/en/privacy-topics/technology/artificial-intelligence/

- Canadian Centre for Cyber Security. Generative Artificial Intelligence (AI). https://www.cyber.gc.ca/en/guidance/generative-artificial-intelligence-ai-itsap00041

- Health Canada. Pre-market Guidance for Machine Learning-Enabled Medical Devices. https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/application-information/guidance-documents/pre-market-guidance-machine-learning-enabled-medical-devices.html

- Office of the Privacy Commissioner of Canada. PIPEDA Fair Information Principles. https://www.priv.gc.ca/en/privacy-topics/privacy-laws-in-canada/the-personal-information-protection-and-electronic-documents-act-pipeda/p_principle/